AI-assisted coding is the single most transformative toolset I have witnessed in my lifetime. I love it and I hate it at the same time.

I can distinctly remember the first time I used the smart autocomplete after installing GitHub Copilot. I was writing a function, began with a quick comment, and suddenly saw exactly the right snippet materialize. It felt like something had reached into my skull and, ever so gently, nudged me along toward the idea I was in the middle of explicating. Poof, here's your code.

Since then, I've spent just under two years messing around with AI-assisted, and now agentic, coding tools. I was using Claude Code before it was cool, and probably 90-plus percent of the code I produce at work and in my free time comes through these tools.

But I've developed a really uncomfortable feeling working with these tools, and I know I'm not alone. It's not quite the fear of job replacement; I've seen enough garbage code generated by agentic tools to be unconcerned, although aware. It's the unnerving feeling of writing, shipping, and vouching for code that I know very little about.

Take my website, for example.

Me working at one of my favorite coffee shops, usually on a side project

I have a personal goal of learning web development over the next year or so. I also wanted a place on the web of my own, and a mentor of mine (shoutout Zack!) told me a great way to start would be to mess around and make my own website. I did so about a year ago, and I decided to use agentic tools to do it so I could learn more about applying and prompting these tools while I learned. I succeeded! I have a website with loads of features: an easy way for me to queue up and ship new blog posts, and even my own search engine over my portfolio data. It mostly works, and it was surprisingly easy to throw together.

The problem? I still don't know how to write JavaScript, how React or Next.js work, or how web app frameworks work in general. I basically know how to manage my own website, but I do it entirely through Claude Code. Yes, I've learned a few things for sure, but not as much as you'd think.

While my website was a success, there were many vibe-coded projects I had to throw away to get there. For a solid couple of months, I got it into my head to build some automations, scripts, and eventually a package to manage my ever-expanding portfolio content. I called it DevRel Deck, and I vibe-coded a bunch of processors, chunkers, and ingestion mechanisms to easily grab new content I made and make it indexable on my portfolio. It worked initially, but as the months passed and complexity grew, I lost track of how the programs actually worked. I had to throw the whole damn repo away and start from scratch.

Now you might think: is that so wrong? If I can quickly identify and triage issues with my tooling and maintain my website, where's the harm? After all, most of us get paid to produce outcomes, not to write code in a particular way or in proportion to our coding ability. There are two main reasons this is an issue: vibe coding produces a high volume of code that becomes tricky to maintain and parse, and it can get in the way of learning the very concepts you need to maintain and parse that code. In other words, vibe coding seems to amplify coding throughput while getting in the way of durable skill formation.

And you might think, what's the big deal? Just get the job done and clock out.

Look, I got something to confess. I actually enjoy learning, okay? It's one of the secrets to how I've been able to pivot so many times in my career.

It's fun. I went to the kinda school that only makes sense to go to if you really like learning for the love of the game type shit. I once bought a stats textbook for fun, after I was done with the course I needed it for. That kinda love of learning, okay!

So when I use coding agents I feel accomplished, but malnourished.

After the third or fourth time quickly generating code I couldn't parse, I decided to look around for some solutions.

I came across a peculiar research paper by Anthropic researchers on this very topic.

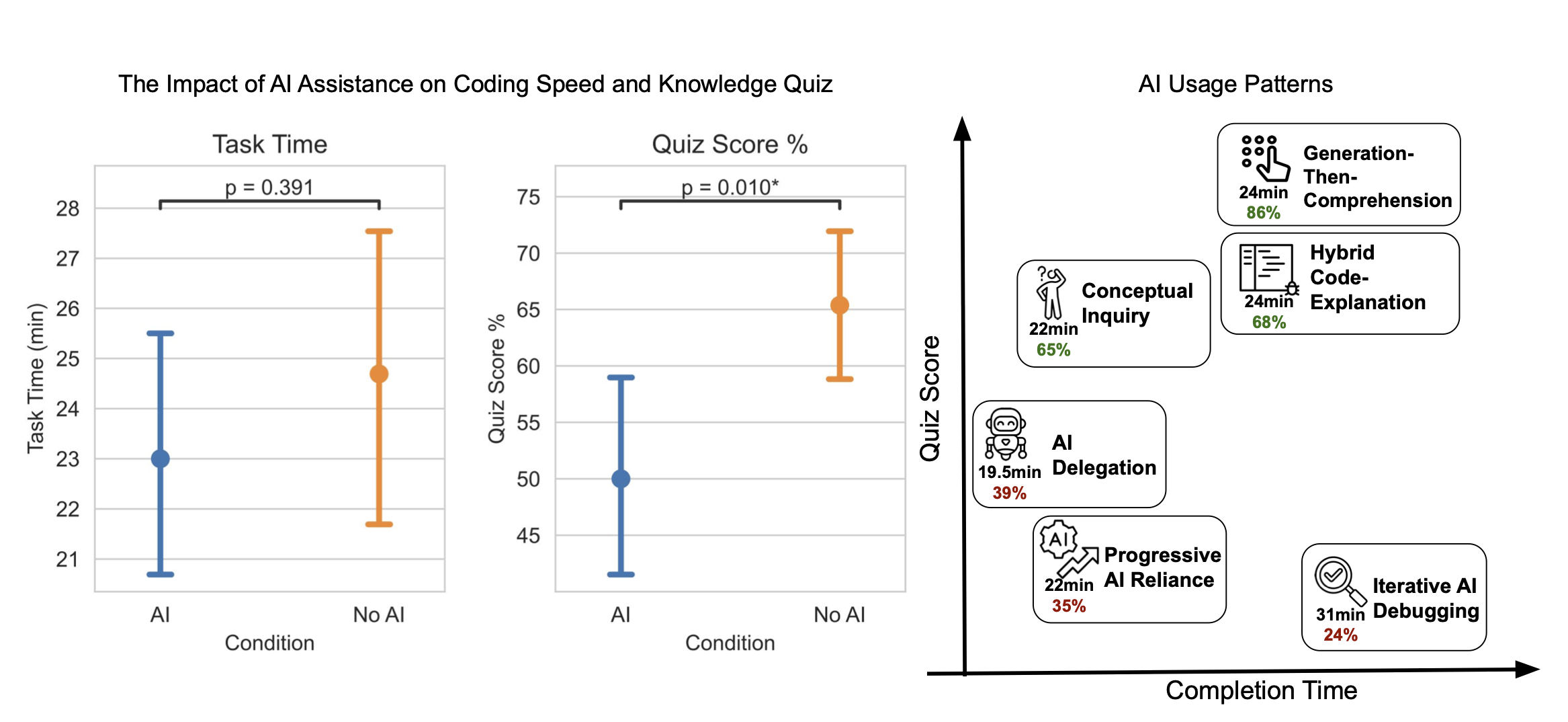

Key graphic from the skill formation paper on task time, quiz scores, and successful behavioral patterns exhibited by stellar students

How AI Impacts Skill Formation by Judy Shen and Alex Tamkin is a clean assessment of whether chatbots affect how developers learn new things. There are a ton of great insights in this paper; I encourage you to read it in full, as it is short and approachable, but I'll quickly summarize the premise and takeaways here.

In the paper, Shen and Tamkin insightfully remind us that supervision and analysis will become important capabilities for developers who leverage agentic tooling, but that this very supervision capability can erode through use of those same tools. This erosion is a consequence of what's known as cognitive offloading.

A few ideas help explain how people perform on tasks when using these tools. It's still unclear whether AI tools improve performance, since results are mixed in many cases. A handy metaphor introduced by the Wiles paper describes AI tools as exoskeletons: devices that make certain tasks possible while worn, but leave you no stronger or more competent once you take them off.

However, until now, no research had cleanly addressed this exact situation: if you are tasked with learning a new library at work and you use an agentic tool, can you evaluate the code that tool generates?

Well, that's exactly what the researchers assessed with a group of programmers, using an async Python library called Trio. They had developers complete tasks under two conditions (no AI and AI), and found that both cohorts took roughly the same amount of time, but that the AI group performed significantly worse on a quiz given after they used the library to complete those tasks! And we aren't talking just a few percentage points; we're talking 17 percent worse, the equivalent of two letter grades. Ouch!

What's great about this paper is that the researchers also examined which chatbot interaction patterns the successful developers exhibited. They found that developers who exhibited any of three behavioral patterns tended to score higher on the quiz, and in some cases finished faster, than those who didn't.

The three behaviors were as follows:

- Generation-then-comprehension, where devs asked the chatbot to generate code and then asked repeated follow-up questions about how that code worked

- Conceptual Inquiry, where devs only asked how the code or the underlying concepts worked in general

- Hybrid Code Explanation, where queries generally asked for both generated code and an explanation of it

On their face, these make sense. All of them reflect engagement with the material in the form of inquiry, and therefore learning and skill acquisition. If you instead let the AI write the code and then just run it, you end up not really learning much.

Now, the eagle-eyed readers here will notice a few limitations, one being that the paper evaluated chatbots and not agentic tools; i.e., the developers in some cases had to copy and paste the generated code instead of accepting or declining edits from an agent directly. But an agent has to be worse, no? If you ask an agent to do something and it's capable of completing the task, what are the chances you learn more than the chatbot group did?

I know, I know, I hear the objections already. This doesn't matter, you say! Understanding the code will be deliberately offloaded to the agent (swarm) so you can do more important things! Feel the AGI!!!

Look. I dislike this take because I hear it most often from two cohorts: extremely experienced engineers, who can only benefit from offloading work to these tools because they already know what they need to know, and wannabe startup founders YOLOing into their nearest YC-like cohort with unlimited compute tokens. I almost never hear it from the people who will arguably be the largest group using these tools: novice and intermediate developers who are either learning these concepts for the first time or are maturing into deployment environments where they encounter new, more complicated projects.

Who better to make this argument than me: a recovering data scientist, a mostly self-taught programmer, and a quick learner. I revel in using agentic tools like Claude Code to do things and build cool stuff, but I simply don't know enough software engineering principles to beat the designs and capabilities Claude Code can produce. And for the same reasons it gets annoying to pick up a project after spending millions of tokens vibe coding on it, it will become increasingly challenging for people like me to maintain our projects and develop new skills without depending on these assistive tools.

So I've decided to do something about it, if not for everyone, then for myself. The research paper above is narrow but clear: conceptual engagement paired with assessment while coding has a positive impact on acquiring new skills. To me, this seems like a rather low-risk bet: create more opportunities to ask questions and assess understanding, and skill acquisition will follow.

I've started building a few tools to do exactly this while I vibe-code on my projects, and I'm seeing some good results. My first iteration was a quiz-me skill that simply generated questions across my codebase and quizzed me on them. I actually learned more from a few sessions with this tool than I had from vibe coding the entire app I was building!

If this is a problem you've felt, I definitely wanna hear from you. Keep an eye on my newsletter for more updates as I work; I hope to release my findings along the way. And maybe, I'll finally get around to learning some webdev.

Join Answering Machines

Thoughts, reflections, tinkering and whimsy around AI, modern tech, the world, and all that comes with it.
